A Lamport Clock is a single integer counter kept on every node in a distributed system. Every event bumps the counter by one. Every outgoing message carries the counter. On receive, a node sets its counter to max(local, received) + 1. This simple rule guarantees that if event A causally happened before event B then LC(A) < LC(B), even when the underlying wall clocks are skewed. It was introduced by Leslie Lamport in his 1978 paper Time, Clocks, and the Ordering of Events in a Distributed System and is the foundation behind vector clocks, Hybrid Logical Clocks, Cassandra timestamps, Kafka producer epochs, and Raft terms.

Key Takeaways

- A Lamport Clock is a per node counter that ticks on every local event, send, and receive. It captures the happens-before relation across the cluster without needing synchronized wall clocks.

- The receive rule is the whole pattern: `local = max(local, received) + 1`. Everything else is application detail.

- Lamport Clocks give a partial order. To get a total order you append the node id and break ties lexicographically.

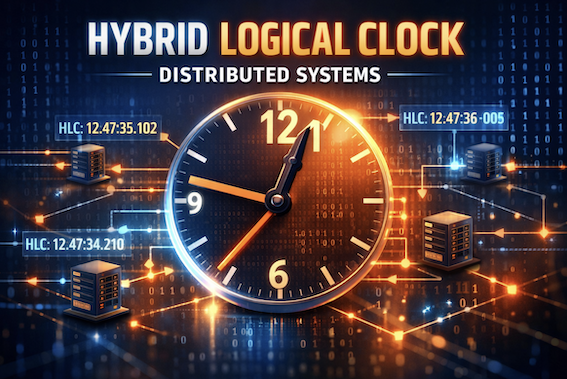

- A Lamport timestamp tells you nothing about wall clock time. It cannot answer 'what happened at 9 AM yesterday' the way an HLC can.

- If A and B are concurrent (neither happens-before the other) you cannot tell from their Lamport timestamps. Vector clocks are needed for that.

- Real systems use Lamport style counters everywhere: Cassandra last write wins timestamps, Kafka producer epoch, Raft term numbers, Paxos ballot ids, and most write-ahead log sequence numbers are all Lamport descendants.

- Lamport Clocks are the building block. Once you understand them, [vector clocks](https://en.wikipedia.org/wiki/Vector_clock) and [Hybrid Logical Clocks](/distributed-systems/hybrid-clock/) fall out as small, focused extensions.

Distributed systems sound like they should be easy. You have a few servers, they pass messages, jobs get done. Then someone asks a simple question. Which event happened first? The order in which user U paid for the cart, the inventory service decremented stock, and the email service sent a confirmation. Three events on three machines. You look at the wall clock timestamps and they disagree by 200 milliseconds. The “send” looks like it happened after the “receive”. You spend a Friday afternoon staring at logs.

That afternoon is exactly what Leslie Lamport had in mind in 1977 when he sketched out a tiny algorithm on a napkin. The algorithm is so simple that you can hold it in your head, and so powerful that almost every distributed database, queue, and consensus protocol you use today is built on top of it. It is called the Lamport Clock, and once you understand it the rest of distributed systems gets a lot less mysterious.

This post walks through what a Lamport Clock is, how the algorithm works, how to extend it to a total order, where it shows up in production systems like Cassandra, Kafka, and Raft, and how it relates to its more famous cousins, the vector clock and the Hybrid Logical Clock.

The Problem: There Is No Global Now

In a single process, ordering events is trivial. The CPU executes one instruction at a time. The program counter is your clock. Done.

The moment you split work across two machines, that simplicity is gone. Each machine has its own clock crystal that drifts a few microseconds per second. Each runs an NTP daemon that periodically nudges the clock forward or backward to stay close to a reference time. Each is subject to virtualization pauses, leap seconds, and the occasional sysadmin who runs date -s on the wrong host.

The result is that when machine A says “this happened at 12:00:00.500” and machine B says “this happened at 12:00:00.498”, you cannot trust which was actually first. The skew is real and it is unavoidable. The classic Riak post on clocks tells the war story of clocks drifting by 30 seconds in production and silently dropping writes.

So we cannot use the wall clock. What we can use is causality. If event A sent a message that triggered event B, then A definitely happened before B, no matter what the wall clocks say. That is the insight Lamport had, and the entire pattern is built around it.

The Happens-Before Relation

Before we can talk about the algorithm, we need one definition. Lamport defined the happens-before relation, written A -> B, on events in a distributed system using three rules.

graph TD

R1["<b>Rule 1: Same Node</b><br/>If A and B happen on the same node<br/>and A came first, then A -> B"]

R2["<b>Rule 2: Send and Receive</b><br/>If A is the send of a message<br/>and B is the matching receive,<br/>then A -> B"]

R3["<b>Rule 3: Transitivity</b><br/>If A -> C and C -> B,<br/>then A -> B"]

R4["<b>Concurrent</b><br/>If neither A -> B nor B -> A,<br/>then A and B are concurrent.<br/>Their order does not matter."]

R1 --> R3

R2 --> R3

R3 --> R4

style R1 fill:#dbeafe,stroke:#1d4ed8,stroke-width:2px

style R2 fill:#dbeafe,stroke:#1d4ed8,stroke-width:2px

style R3 fill:#c8e6c9,stroke:#388e3c,stroke-width:2px

style R4 fill:#fff3e0,stroke:#f57c00,stroke-width:2px

That is it. Three rules. They define a partial order on events because some pairs are unrelated. That is fine. If two events never exchanged messages, their order does not matter for correctness anyway.

Concrete example. Imagine three nodes labeled A, B, C.

sequenceDiagram

participant A as Node A

participant B as Node B

participant C as Node C

Note over A: e1: local event

A->>B: e2: send

Note over B: e3: receive

Note over B: e4: local event

B->>C: e5: send

Note over C: e6: receive

Note over A: e7: local event

Note over C: e8: local event

What can we say about ordering?

e1 -> e2because they are on the same node.e2 -> e3because of send-receive. Soe1 -> e3by transitivity.e3 -> e4 -> e5 -> e6chain across B and C.e1 -> e6is true (you can trace a chain).e7ande8are concurrent with each other. Neither node ever heard from the other in this slice of the diagram.e7ande6are also concurrent.

The happens-before relation is exactly what we want to capture in numbers. That is what the Lamport Clock does.

The Lamport Clock Algorithm

Each node j keeps a single integer LC.j, initially zero. There are three rules that update it. They mirror the three rules of happens-before.

Rule 1: Local Event

Before any local event, increment the counter.

1

LC.j = LC.j + 1

Rule 2: Send

Before sending a message, increment the counter, then attach the new value to the outgoing message.

1

2

LC.j = LC.j + 1

send(message, LC.j)

In practice steps 1 and 2 are usually merged: a “send” is just a kind of local event.

Rule 3: Receive

When you receive a message tagged with sender timestamp t, take the max of your local counter and t, then add one.

1

2

(message, t) = receive()

LC.j = max(LC.j, t) + 1

That is the entire algorithm. Five lines of pseudocode and a single integer per node.

Why It Works

The receive rule is the magic. By taking max you guarantee that the local counter jumps past any value the sender had ever seen. By adding + 1 you guarantee that the receive event is strictly later than the send. So if A -> B (A causally precedes B), then by induction LC(A) < LC(B).

The famous Patterns of Distributed Systems chapter on the Lamport Clock on Martin Fowler’s site reduces the whole thing to a one method tick(requestTime):

1

2

3

4

5

public int tick(int requestTime) {

latestTime = Integer.max(latestTime, requestTime);

latestTime++;

return latestTime;

}

Whether you call it on send, receive, or local event, the math is the same. That is why the pattern is so easy to bolt onto an existing system.

A Worked Example

Three nodes. Each starts at zero. Time flows left to right. Watch the counters.

sequenceDiagram

participant A as Node A<br/>LC=0

participant B as Node B<br/>LC=0

participant C as Node C<br/>LC=0

Note over A: Local event<br/>LC=1

Note over B: Local event<br/>LC=1

A->>B: Send with LC=2<br/>(A bumps to 2)

Note over B: Receive at LC=1<br/>max(1,2)+1 = 3<br/>LC=3

B->>C: Send with LC=4<br/>(B bumps to 4)

Note over C: Receive at LC=0<br/>max(0,4)+1 = 5<br/>LC=5

Note over A: Local event<br/>LC=3

Note over C: Local event<br/>LC=6

Trace any causal chain. e_send_AB = 2 < e_recv_AB = 3 < e_send_BC = 4 < e_recv_BC = 5. The numbers grow strictly along the chain, even though Node C’s wall clock might be 200 milliseconds behind Node A’s. That is the power of the Lamport Clock in one diagram.

Notice also that Node A’s local event at LC = 3 and Node C’s local event at LC = 6 look like A came before C numerically. But these events are concurrent. The Lamport Clock cannot tell you that. It just gives you a number that respects causality when causality exists.

A Reference Implementation

Here is a clean Python implementation. It is short on purpose.

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

import threading

class LamportClock:

def __init__(self):

self._lock = threading.Lock()

self._counter = 0

def tick(self):

"""Call before a local event or an outgoing send.

Returns the timestamp to attach to the event."""

with self._lock:

self._counter += 1

return self._counter

def update(self, received):

"""Call when receiving a message with timestamp `received`.

Returns the new local timestamp."""

with self._lock:

self._counter = max(self._counter, received) + 1

return self._counter

def now(self):

with self._lock:

return self._counter

Three things to notice.

- Every update takes a lock. A Lamport Clock is shared mutable state inside a process. If two threads tick at the same time you can violate monotonicity.

tickandupdateare the only writers. Reading without writing is safe but you should still lock for memory visibility on most platforms.- The integer can grow forever. In practice this is fine. A 64 bit counter at one billion ticks per second still lasts roughly 290 years. A 32 bit counter wraps in seconds at high event rates so use 64.

If you want to see a battle tested implementation, look at how CockroachDB encodes the logical part of its HLC. The same monotonic counter trick is in there.

From Partial Order to Total Order

A pure Lamport Clock gives a partial order. Two events on different nodes can land on the same counter value. For some workloads that is fine. For others, like distributed mutual exclusion, you need a strict total order.

The standard fix is to append the node id and compare lexicographically. The pair (counter, node_id) is unique. To compare two timestamps:

1

2

3

4

def compare(a, b):

if a.counter != b.counter:

return a.counter - b.counter

return a.node_id - b.node_id

graph LR

Q["Two Lamport timestamps<br/>same counter value?"]

Q -->|No| C1["Compare counters<br/>Higher counter wins"]

Q -->|Yes| C2["Compare node ids<br/>Higher node id wins"]

C1 --> R["<b>Total order</b><br/>across the cluster"]

C2 --> R

style Q fill:#dbeafe,stroke:#1d4ed8,stroke-width:2px

style C1 fill:#c8e6c9,stroke:#388e3c

style C2 fill:#c8e6c9,stroke:#388e3c

style R fill:#fff3e0,stroke:#f57c00,stroke-width:2px

This (counter, node_id) pair is sometimes called a Lamport tuple or Lamport pair. It still respects happens-before because if A -> B then LC(A) < LC(B) regardless of node id. The node id only matters when the counters tie, and tied counters mean the events are concurrent, so any deterministic tiebreaker is correct.

The original Lamport paper used this exact construction to build a distributed mutual exclusion algorithm. Every node broadcasts a request stamped with its Lamport tuple, and the lowest tuple wins the lock. No central coordinator needed.

What Lamport Clocks Cannot Do

Before celebrating, two important limits.

Limit 1: They Cannot Detect Concurrency

Look at this scenario.

sequenceDiagram

participant A as Node A

participant B as Node B

Note over A: Local event<br/>LC = 1

Note over A: Local event<br/>LC = 2

Note over A: Local event<br/>LC = 3

Note over B: Local event<br/>LC = 1

Note over B: Local event<br/>LC = 2

Note over A,B: No messages exchanged

A’s third event has LC = 3. B’s second event has LC = 2. A “looks” later. But the events are concurrent. They never influenced each other. The Lamport Clock cannot tell the difference between “A truly happened after B” and “they are unrelated and we are guessing”. For workloads that need to detect concurrent writes, like Dynamo style key-value stores, you need a vector clock.

Limit 2: They Have No Wall Clock Meaning

A Lamport timestamp of 4231 tells you nothing about when the event happened. You cannot run a “show me all writes between 9 AM and 10 AM yesterday” query. You cannot use it as an MVCC version that matches a user visible timestamp. That is the gap that the Hybrid Logical Clock fills.

For most ordering and consensus problems, neither limit matters. But knowing when you are about to hit them saves a lot of debugging.

How Lamport Clocks Compare to Other Clocks

Each clock fixes a different problem. Pick the one that matches your need.

graph TD

PROB["What do you need to order events in distributed systems?"]

PROB --> Q1["Just a real time approximation?"]

PROB --> Q2["Causal ordering only?"]

PROB --> Q3["Detect concurrency between events?"]

PROB --> Q4["Causal ordering + readable time?"]

Q1 --> WC["<b>Wall Clock / NTP</b><br/>Simple but breaks under drift<br/>and backward jumps"]

Q2 --> LC["<b>Lamport Clock</b><br/>Single integer counter<br/>Compact, easy to ship<br/>No relation to wall time"]

Q3 --> VC["<b>Vector Clock</b><br/>One counter per node<br/>Detects concurrent writes<br/>Grows with cluster size"]

Q4 --> HLC["<b>Hybrid Logical Clock</b><br/>Wall time + small counter<br/>Causal + readable + 64 bit"]

style PROB fill:#dbeafe,stroke:#1d4ed8,stroke-width:2px

style LC fill:#c8e6c9,stroke:#388e3c,stroke-width:2px

style WC fill:#fff3e0,stroke:#f57c00

style VC fill:#fff3e0,stroke:#f57c00

style HLC fill:#fff3e0,stroke:#f57c00

| Property | Wall Clock | Lamport Clock | Vector Clock | Hybrid Logical Clock |

|---|---|---|---|---|

| Total order | Yes (with ties) | With node id tie-break | No (partial only) | Yes |

| Captures happens-before | No | Yes | Yes | Yes |

| Detects concurrent events | No | No | Yes | No |

| Close to real time | Yes | No | No | Yes |

| Size | 64 bits | 64 bits | O(N) bits | 64 bits |

| Tolerates clock drift | No | N/A | N/A | Yes |

| Used by | Most legacy systems | Cassandra, Kafka, Raft, Paxos | Dynamo, Riak, Voldemort | CockroachDB, MongoDB, YugabyteDB |

If you want a deeper comparison of the time-aware family, the Hybrid Logical Clock post walks through the same trade-offs from the HLC side.

Real World Implementations

Almost every distributed system you use is running a Lamport Clock under a different name. Here are some of the bigger ones.

Apache Cassandra: Last Write Wins Timestamps

Cassandra uses Lamport style timestamps for last write wins (LWW) conflict resolution. Every column write carries a microsecond timestamp from the coordinator node. On read, Cassandra returns the value with the highest timestamp.

The timestamp is normally derived from the coordinator’s wall clock, but the comparison rule is pure Lamport. If two writes for the same key arrive with the same timestamp, the higher value byte order wins as a deterministic tiebreaker, exactly the same trick as appending a node id. The downside, as documented in Aphyr’s Jepsen analysis of Cassandra, is that LWW is fragile when wall clocks drift, which is why HLC based systems are increasingly preferred for new designs.

Apache Kafka: Producer Epoch and Leader Epoch

Kafka uses a Lamport style producer epoch to fence off zombie producers after a transaction restart. Every transactional producer gets a producer id and an epoch number from the transaction coordinator. The epoch is monotonically increased on every transaction restart. Brokers reject any append from a producer whose epoch is older than the latest one they have seen.

Kafka also uses a leader epoch on every partition that follows the same monotonic, max on receive rule. Followers use the leader epoch when truncating their logs after a leader change to avoid losing committed data. The pattern is documented in KIP-101 and underpins everything Kafka does to keep replicas consistent. If you want the bigger picture, our deep dive on how Kafka works traces these epochs through the full write path.

Raft: Term Numbers

In Raft, every leader is elected for a numbered term. Terms increase monotonically. Whenever a node sees a message with a higher term it adopts that term and steps down if it was leader. The rule is identical to a Lamport receive: term = max(local_term, received_term), with the increment happening only on a new election rather than every receive.

This is what makes Raft safe across network partitions. Old leaders that come back online see a higher term in heartbeats and immediately resign. The pattern shows up the same way in Paxos ballot numbers and in ZAB, the protocol behind ZooKeeper.

Write-Ahead Logs and Sequence Numbers

Almost every write-ahead log stamps entries with a monotonically increasing sequence number that is, in spirit, a Lamport counter. PostgreSQL’s LSN, MySQL’s GTID, MongoDB’s optime, etcd’s Raft log index, Kafka’s offset. They are all single integer counters that tick on every write and travel with the data when it replicates. The receive rule is implicit because followers always apply entries in order.

When you understand the Lamport Clock, you stop seeing each of these as a separate trick and start seeing them as the same idea wearing different hats.

Other Notable Implementations

| System | Where Lamport style ordering shows up | Notes |

|---|---|---|

| Cassandra | Cell timestamps for LWW | Microsecond resolution, byte order tie-break |

| Kafka | Producer epoch, leader epoch, log offsets | Monotonic per partition |

| Raft | Term numbers, log indices | max on receive, step down on higher term |

| Paxos | Ballot numbers | Same monotonic counter pattern |

| ZooKeeper / ZAB | zxid (epoch + counter) | Lamport tuple in disguise |

| PostgreSQL | LSN | Monotonic byte position in WAL |

| Spanner / TrueTime | Commit timestamps | Hybrid of wall clock + Lamport-style increments |

A Worked Example: Distributed Mutex

Lamport’s original 1978 paper used Lamport Clocks to build a fully distributed mutual exclusion algorithm. There is no central lock manager. Every node decides for itself whether it holds the lock, and they all agree. Here is the sketch.

sequenceDiagram

participant A as Node A<br/>LC=5

participant B as Node B<br/>LC=3

participant C as Node C<br/>LC=4

Note over A: Wants the lock<br/>LC=6, request (6, A)

A->>B: REQUEST (6, A)

A->>C: REQUEST (6, A)

Note over B: Wants the lock<br/>LC=4, request (4, B)

B->>A: REQUEST (4, B)

B->>C: REQUEST (4, B)

Note over A: Sees (4, B) is lower<br/>Sends ACK

A->>B: ACK

Note over C: ACKs both

C->>A: ACK

C->>B: ACK

Note over B: Has all ACKs and<br/>holds lowest tuple<br/><i class='fas fa-lock'></i> Enters critical section

The rule is simple. A node wanting the lock broadcasts REQUEST(LC, node_id). Other nodes reply with ACK. A node enters the critical section when it has received an ACK from every other node and its own REQUEST is the lowest (counter, node_id) tuple in the global queue. When done, it broadcasts RELEASE.

Because every event uses the Lamport tuple total order, every node agrees on which request came “first”. No coordinator. No locks. No leader election. Just (counter, node_id) pairs and a few message types.

This is a beautiful demonstration of how much you can build on top of one tiny algorithm. Production systems usually use simpler designs (a Raft leader, a database row lock) because the message complexity is high, but the Lamport mutex is still required reading for any distributed systems engineer.

How Lamport Clocks Connect to Other Patterns

Lamport Clocks are the seed. Once you have them, a whole family of distributed systems patterns becomes natural.

- A vector clock is one Lamport counter per node, packed in a vector.

- A Hybrid Logical Clock is a Lamport counter plus a wall clock anchor.

- A Write-Ahead Log sequence number is a Lamport counter scoped to one log.

- A Replicated Log relies on monotonic indices that follow Lamport’s receive rule across followers.

- Paxos ballot numbers and Raft terms are Lamport tuples in production code.

- The High Watermark and Low Watermark are pointers into a Lamport ordered log.

- Heartbeats carry counters that double as a clock health signal.

If you have not read the transactional outbox pattern or the two-phase commit posts, those show how these primitives compose into reliable workflows.

Failure Modes and How to Handle Them

The algorithm is robust but there are a few sharp edges in production.

Lost Messages Do Not Break the Clock

If a message is dropped, the receiver simply never bumps its counter for that send. The happens-before relation across the dropped pair is lost, but the rest of the system is unaffected. Lamport Clocks degrade gracefully.

Out of Order Messages

Network reordering is fine because the receive rule uses max. A late message with a small timestamp does not pull the counter backward.

Counter Overflow

A 64 bit counter is essentially unbounded. A 32 bit counter at 10K events per second wraps in about five days, so always use 64 in production. If you ever truly worry about overflow, periodic snapshots of the counter and modular arithmetic with epoch numbers is the answer (this is how Raft layers terms over indices).

Skew Between Counters Across Nodes

Lamport counters drift apart between nodes that talk infrequently. That is by design. Drift only matters if you compare counters across nodes that have never communicated, which is exactly the case where the comparison is meaningless anyway.

Misuse As a Wall Clock

The most common production mistake is treating a Lamport counter as if it were a real timestamp. It is not. A counter of 4231 does not mean “January 4th”. If you find yourself wanting to ask “what events happened in this hour”, you want a Hybrid Logical Clock or a separate wall clock column. Use the right tool.

Related tool: Epoch Timestamp Converter. Convert Lamport timestamps and wall-clock values when you debug distributed event ordering.

Key Takeaways for Developers

-

Memorize the receive rule.

local = max(local, received) + 1. That single line is the entire pattern. Everything else is application detail. -

Use a Lamport tuple for total order. When you need a deterministic single ordering across the cluster, append the node id and compare lexicographically. Two lines of code, full total order.

-

Do not confuse counter values with wall time. A Lamport counter has no calendar meaning. If your product team wants “show me events from yesterday”, reach for a Hybrid Logical Clock or a separate timestamp column.

-

Reach for vector clocks when you need to detect concurrent writes. If your system has to merge concurrent updates (Dynamo style), Lamport is not enough. Vector clocks are the right tool.

-

You are already using Lamport Clocks. Kafka leader epochs, Raft terms, Cassandra timestamps, Postgres LSNs are all the same idea. Once you see it, you cannot unsee it.

-

Pair Lamport Clocks with heartbeats. Heartbeats give you liveness. Lamport Clocks give you ordering. Together they cover the two hardest problems in a distributed system.

Wrapping Up

Time in distributed systems is one of those topics that looks intimidating until you see the trick. Wall clocks lie. Atomic clocks are expensive. We need an ordering, but we cannot trust the obvious one.

Leslie Lamport’s 1978 insight was that we do not need a real clock. We need an algorithm that respects causality. A counter that increments on every event and jumps forward on every receive is enough. The math is short. The properties are deep. The pattern is hiding inside almost every distributed system you have ever used.

Once Lamport Clocks click, vector clocks fall out as a small extension, Hybrid Logical Clocks fall out as a clever combination, and the consensus protocols you use start to look like elaborate scaffolding around the same monotonic counter.

Next time you debug an “event B looks like it happened before A” puzzle, the first question to ask is: what does the Lamport timestamp say? You may save yourself a Friday afternoon.

For more distributed systems patterns, check out Hybrid Logical Clock, High Watermark, Low Watermark, Write-Ahead Log, Replicated Log, Majority Quorum, Paxos, Heartbeat, Lease, Gossip Dissemination, Two-Phase Commit, and How Kafka Works.

Further reading: Lamport’s original 1978 paper Time, Clocks, and the Ordering of Events in a Distributed System; the Lamport Clock chapter in Unmesh Joshi’s Patterns of Distributed Systems on Martin Fowler’s site; Kevin Sookocheff’s Lamport Clocks post; the Baeldung CS article on the Lamport Clock; and the Wikipedia entry on Lamport timestamps for a quick reference.